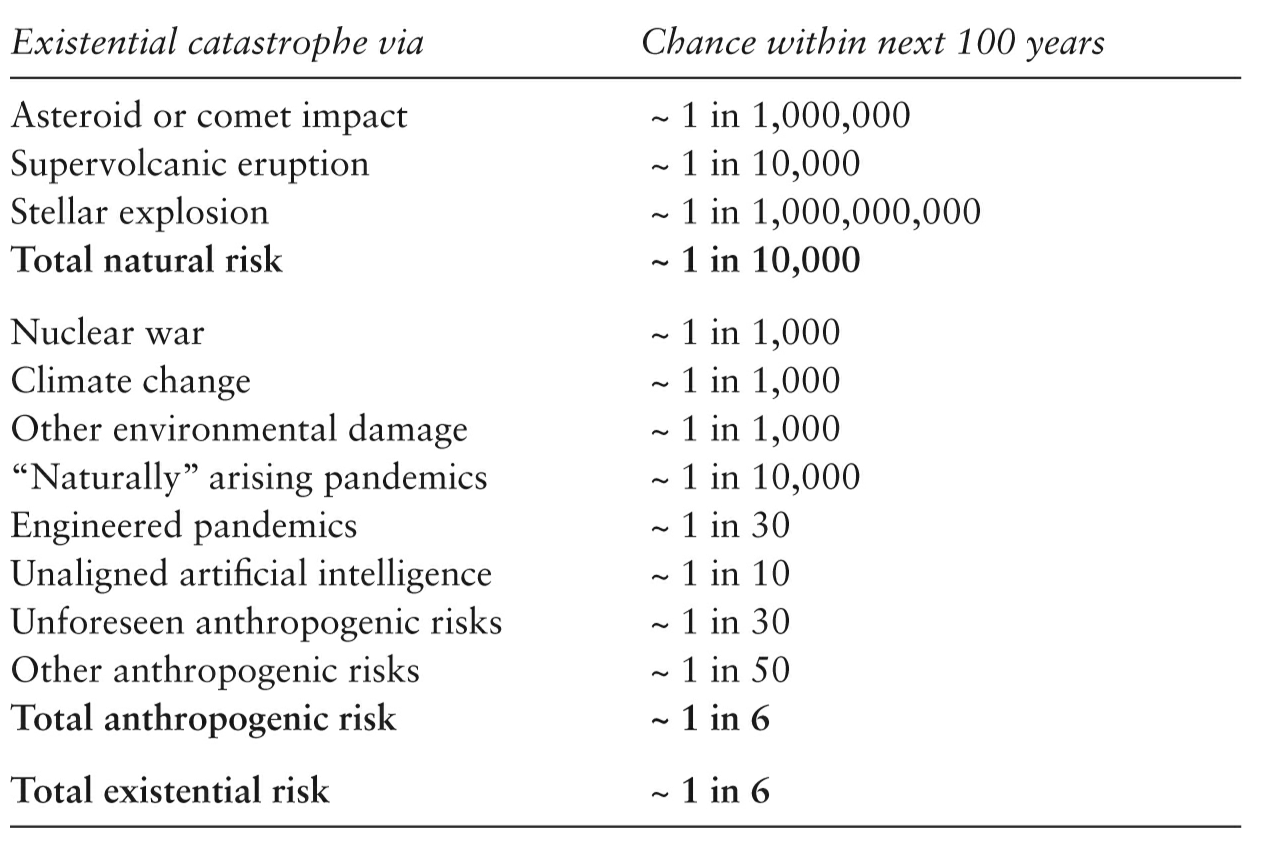

This book is about the risk of "existential catastrophe", meaning humanity either going extinct or suffering some kind of severe permanent limitation, such as losing our technology and losing the ability to ever redevelop it. Ord estimates the risks we face as follows (this is Table 6.1 from page 167):

Plausibility. There seems to be a pattern: the less we know about something, the higher Ord's estimate. So it's tempting to conclude his estimates are alarmist. But considering the highly unusual time we live in, they're worth serious consideration. There's no precedent in history for the level of power that humans have very recently gained through technology.

This book drilled home for me how little confidence we can justifiably derive from the fact that we've survived everything thus far. Nuclear weapons have existed for less than 100 years (just 3 or 4 generations!); that's not enough of a track record for us to conclude society has effective restraints against nuclear war. And the nuke-related accidents Ord lists make our governments look pretty amateurish. The lack of escalation in the Cold War may have been a lucky break rather than a good predictor of how future conflicts will go. Threats from biotech are also relatively new, and the technology is getting scarier all the time: we're quickly approaching the point where random individuals, not just major labs, may be able to tinker with virus genomes. Plus, as Ord recounts, even major labs have much worse safety records than you'd expect when it comes to handling dangerous pathogens.

AI risk. Based on recent progress in machine learning, there's a good chance that within decades we'll have artificial general intelligence (AGI) - software that can do anything a human can. Even if it's barely as smart as an ordinary human at first, it will have some major advantages over us, including:

These advantages should allow an AGI to quickly become much more powerful than we are. It'll use that power to accomplish whatever goals we programmed it to have. That's scary, because specifying goals that don't have unintended consequences is really hard. Think of all the bugs you've encountered using your phone or computer: the device was doing exactly what it was programmed to do; it's just that what the programmer wrote wasn't really what they meant. The worry is that AGI will magnify such unintended consequences to enormous proportions. The classic example from Nick Bostrom is that an innocuous-sounding goal like "make as many paper clips as possible" would, if taken to its logical conclusion, involve wiping out humanity. Of course, a real-world AGI would be designed with a much more complicated set of goals, constraints, and safeguards - but the more complex the design, the more likely it is to contain mistakes.
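To make the "doing exactly what it was told" point concrete, here is a deliberately cartoonish Python sketch. Everything in it (the plan names, the numbers, the two objective functions, the 0.9 threshold) is hypothetical and invented for illustration; it is not how Ord or Bostrom model AGI. An optimizer that scores plans only by paper clips produced picks the plan that consumes everything else, because nothing in its objective says not to.

```python
# Hypothetical toy, not a model of real AGI: candidate plans with made-up outcomes.
PLANS = {
    "run the factory as usual": {"paperclips": 1_000, "resources_left_for_humans": 0.99},
    "buy a second factory": {"paperclips": 50_000, "resources_left_for_humans": 0.95},
    "convert all available matter": {"paperclips": 10**12, "resources_left_for_humans": 0.0},
}


def naive_objective(outcome: dict) -> float:
    """What the programmer wrote: count paper clips and nothing else."""
    return outcome["paperclips"]


def patched_objective(outcome: dict) -> float:
    """One attempted fix: forbid plans that leave humans too little (arbitrary threshold)."""
    if outcome["resources_left_for_humans"] < 0.9:
        return float("-inf")
    return outcome["paperclips"]


def choose_plan(objective) -> str:
    # The optimizer does exactly what it is told: pick the highest-scoring plan.
    return max(PLANS, key=lambda name: objective(PLANS[name]))


print(choose_plan(naive_objective))    # convert all available matter
print(choose_plan(patched_objective))  # buy a second factory
```

The patch only helps because this toy lists the one side effect that matters up front. A real system would need every such rule written down correctly, and each added rule is one more place for a mistake to hide.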

You can easily think of obstacles that would stand in the way of an AGI takeover. The question is, how confident are we that the AGI wouldn't overcome those obstacles? Even if it's not the most likely outcome, I think Ord makes a good case that it's not wildly unlikely, either:

History already involves examples of individuals with human-level intelligence (Hitler, Stalin, Genghis Khan) scaling up from the power of an individual to a substantial fraction of all global power, as an instrumental goal to achieving what they want. And we saw humanity scaling up from a minor species with less than a million individuals to having decisive control over the future. So we should assume that this is possible for new entities whose intelligence vastly exceeds our own—especially when they have effective immortality due to backup copies and the ability to turn captured money or computers directly into more copies of themselves.

Within the Effective Altruism (EA) community - where Ord is a major figure - the danger posed by AGI has become a prominent, but controversial, concern. One reason it's controversial is that a lot of EA people are software engineers. When someone decides that the most important risk facing humanity in our time happens to be one that falls within the purview of the field that they were personally already interested and skilled in... well, that's a bit suspicious. And both AI risk and the research into mitigating it are speculative, whereas the original focuses of EA were measurable things like health, poverty, and animal welfare. Is it really wise to divert resources away from those immediate issues?

My attitude has usually been that AI risk is real, but small. Research on mitigating it deserves some funding, but only a very small amount compared to better-understood problems. I don't want EA to become synonymous with worrying about AI. I think EA's most important contribution to the world is providing ideas that help people efficiently accomplish whatever altruistic goals they believe in, and I don't want disagreements over which goals should be highest priority to hinder the spread of those ideas.

That's still basically my opinion, but my estimate of the size of AI risk, and of how much mitigation effort it deserves, has grown somewhat. For me, it would take a larger leap of faith to believe we won't eventually develop AGI than to believe that we will; and as hard as it is to imagine the exact consequences of that technology, it's even harder to imagine business as usual continuing after its invention. I'm more scared of the economic consequences (what will happen to society when the value of human labor goes to 0?), but ignoring the possibility of accidentally misaligned goals seems reckless too. Given the stakes and the urgency of the problem - this is a potential crisis that we have only a limited time window to avert - getting a whole lot of software engineers and computer scientists thinking about it seems like a good idea.

Stakes. Both The Precipice and MacAskill's What We Owe the Future (review) spend a lot of time downplaying most existential risks before concluding that addressing them is vitally important. This seems strange at first, but it makes sense: the odds are low, but not nearly low enough to be comfortable with when we're talking about the possible end of history. Both books note that the projections which make us think climate change or nuclear war can't truly wipe us out involve a lot of uncertainty and incomplete knowledge. It doesn't seem crazy to account for that uncertainty by using a risk estimate on the order of Ord's 1 in 1000, and that's a larger risk of apocalypse than we should be complacent about.
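Why does uncertainty push the estimate up rather than down? One way to see it is the law of total probability. The numbers here are invented purely for illustration and are not Ord's or MacAskill's: let $C$ be a civilization-ending catastrophe from, say, nuclear winter, and $M$ the event that our reassuring projection is basically right. Suppose the projection itself puts the risk at one in a million, we give it a 1% chance of being badly wrong, and if it is wrong the risk is 10%.

```latex
\begin{aligned}
P(C) &= P(M)\,P(C \mid M) + P(\neg M)\,P(C \mid \neg M) \\
     &\approx 0.99 \times 10^{-6} + 0.01 \times 0.1 \\
     &\approx 10^{-6} + 10^{-3} \approx 1.0 \times 10^{-3}
\end{aligned}
```

The second term dominates: the chance that the model is mistaken, not the model's own tiny number, sets the order of magnitude, which is how a projection saying "this can't truly wipe us out" can still leave a risk around 1 in 1000.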

...assuming that an apocalypse would be a really bad thing, of course. In case you're ambivalent about apocalypses, Ord devotes a chapter to describing how magnificent future civilization ought to be if we don't screw things up, surpassing today's civilization in every desirable quality and achievement to a mind-boggling degree. I agree that's a compelling reason for humanity to stick around. Other reasons he gives seem less compelling: a debt to our ancestors; an obligation "to repair the damage we have done to the Earth's environment"; or an opportunity to eventually save Earth's biosphere from its natural destruction.

What if you think the world is such a nightmare hellscape that extinction would be preferable to any risk of propagating this mess throughout time and space? That conclusion might follow from the philosophical position called negative utilitarianism (NU), which has a small presence in EA circles. It claims suffering is inherently bad and worth avoiding, but happiness is not inherently good or worth seeking (except to the extent it alleviates suffering). Ord addresses this in a footnote:

Even if one were committed to the bleak view that the only things of value were of negative value, that could still give reason to continue on, as humanity might be able to prevent things of negative value elsewhere on the Earth or in other parts of the cosmos where life has arisen.

NU seems clearly false to me anyway, but it doesn't have a monopoly on skepticism about the value of existence. Anti-natalism, another idea that occasionally comes up in EA groups, argues that having children is bad. Sometimes this belief is rooted in NU or other abstract arguments (which I've criticized in a review of Benatar's book on the subject). A more plausible argument for it, to me, is that in practice life tends to contain drastically more suffering than joy overall, so we're subjecting future generations to a lousy deal when we bring them into existence. I'm very much on the fence about whether that premise is true, but if it is, I think Ord's comment above is a good reason to still reject anti-natalism. A great deal of the world's suffering is inflicted by wild animals upon each other as they act out their natural instincts. They have no ability to change their world to be less vicious, and the same process of evolution that created them may be creating similar ecosystems all over the universe. We may have a very rare and precious opportunity to counteract the indifferent cruelty of nature. Ord says this well:

...humans are the only beings we know of that are responsive to moral reasons and moral argument—the beings who can examine the world and decide to do what is best. If we fail, that upward force, that capacity to push toward what is best or what is just, will vanish from the world.

Space. In a sidebar, Ord downplays (without dismissing) the benefits of spreading into space:

Many risks, such as disease, war, tyranny and permanently locking in bad values are correlated across different planets: if they affect one, they are somewhat more likely to affect the others too. A few risks, such as unaligned AGI and vacuum collapse, are almost completely correlated: if they affect one planet, they will likely affect all. And presumably some of the as-yet-undiscovered risks will also be correlated between our settlements.

Space settlement is thus helpful for achieving existential security (by eliminating the uncorrelated risks) but it is by no means sufficient. Becoming a multi-planetary species is an inspirational project—and may be a necessary step in achieving humanity’s potential. But we still need to address the problem of existential risk head-on, by choosing to make safeguarding our longterm potential one of our central priorities.

I'm not sure this gives enough credit to the degree of separation that interstellar distances enforce. Populations separated by many light-years could only interact slowly and would have a much more difficult time exercising power and influence over each other. Some may be able to make themselves literally impossible for others to reach (Ord considers how the expansion of the universe could eventually cause this; I'd add the possibility of some populations living on ships and going on an endless voyage outward). Ord doesn't think that would buy us much:

...these locations cannot repopulate each other, so a 1% risk would on average permanently wipe out 1% of them. ... Even if we only care about having at least one bastion of humanity survive, this isn’t very helpful. For without the ability to repopulate, if there were a sustained one in a thousand chance of extinction of each realm per century, they would all be gone within 5 million years.
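Ord's arithmetic is easy to check. A one in a thousand chance per century, sustained over 5 million years (50,000 centuries), leaves each realm with a survival probability of $0.999^{50000} \approx e^{-50}$. The quoted passage doesn't say how many realms Ord has in mind, so the realm counts in this minimal Python sketch are hypothetical; the function names are mine, not the book's.

```python
import math

RISK_PER_CENTURY = 1 / 1000   # Ord's "one in a thousand chance ... per century"
CENTURIES = 5_000_000 // 100  # 5 million years = 50,000 centuries


def survival_probability(risk: float, centuries: int) -> float:
    """Chance that a single realm avoids extinction in every one of `centuries` centuries."""
    return (1 - risk) ** centuries


def p_at_least_one_survives(n_realms: float, risk: float, centuries: int) -> float:
    """Realms can't repopulate each other, so each realm's fate is treated as independent.

    Computes 1 - (1 - s)^N via log1p/expm1 to stay accurate when s is tiny.
    """
    s = survival_probability(risk, centuries)
    return -math.expm1(n_realms * math.log1p(-s))


p = survival_probability(RISK_PER_CENTURY, CENTURIES)
print(f"per-realm survival: {p:.1e}")  # per-realm survival: 1.9e-22
for n in (10**10, 10**20):  # hypothetical realm counts, not from the book
    print(n, f"{p_at_least_one_survives(n, RISK_PER_CENTURY, CENTURIES):.1e}")
```

Because every 1,000 centuries (100,000 years) multiplies a realm's survival odds by about $0.999^{1000} \approx e^{-1}$, even astronomically many isolated realms barely change the picture. All of this assumes the risk really is sustained, which is what the next paragraph questions.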

But I suspect several of the biggest risks would not be "sustained". Consider the possibility of a totalitarian government using technology to ensure it stays in power forever: that can only happen once. If it happens while we're all on Earth, we're all stuck with that particular government forever. If it happens after we've split into (as Ord puts it) "independent realms", then even if each realm suffers a totalitarian takeover sooner or later, they'd all be different governments, and some may be dramatically better or worse than others - presumably a lot depends on just who happens to be in the right place at the right time when permanent takeover becomes feasible.

More generally: because Ord believes "there is substantially more transition risk than state risk" - i.e., our biggest threats come from temporary transition periods caused by technological advancement - I would think there's enormous value in trying to split humanity up before we go through those transitions. That way, if there's some randomness involved in whether a transition goes well or poorly, we'll have lots of independent chances for it to go well.

But I suppose since settling space requires a lot of technological development, maybe it's just not possible to achieve it prior to triggering many of the dangerous transitions. Also, if there are means by which a population in one corner of the universe could wipe out all populations throughout the universe, then I guess spreading ourselves out could actually make such a catastrophe more likely to happen. Simply having more people increases the odds that someone, somewhere, will both want to destroy everyone and figure out how. So... that sucks. I'm really hoping the no-going-faster-than-light rule turns out to be every bit as firm as physicists say.
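The last two paragraphs pull in opposite directions, but both come down to the same formula: if something happens independently with probability $p$ in each of $n$ places, the chance it happens somewhere is $1 - (1 - p)^n$. This minimal Python sketch uses made-up probabilities (not from the book) and assumes realms really are independent.

```python
def p_any(p_each: float, n: int) -> float:
    """Chance that an event with independent probability p_each occurs in at least one of n places."""
    return 1 - (1 - p_each) ** n


# Hypothetical numbers, chosen only to illustrate the shape of the argument.
P_TRANSITION_GOES_WELL = 0.5   # chance a single realm gets through a risky transition well
P_REALM_DESTROYS_ALL = 0.001   # chance a single realm produces someone able and willing to end everyone

for realms in (1, 10, 1000):
    upside = p_any(P_TRANSITION_GOES_WELL, realms)
    downside = p_any(P_REALM_DESTROYS_ALL, realms)
    print(f"{realms:>5} realms: P(some realm does well) = {upside:.3f}, "
          f"P(universe-wide catastrophe) = {downside:.3f}")
```

With these numbers, splitting into many realms makes it nearly certain that at least one handles a transition well. But if any single realm can reach and destroy all the others, the same multiplication works against us: a one in a thousand chance per realm becomes roughly a 63% chance across 1,000 realms.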

Action. I appreciate that Ord includes (in Appendix F) a list of concrete suggestions for how to work on each risk. Some are research directions; others are daunting political projects. It's easy to be cynical about problems that need international cooperation, but Ord reminds us not to be fatalistic:

...consider the creation of the United Nations. This was part of a massive reordering of the international order in response to the tragedy of the Second World War. The destruction of humanity’s entire potential would be so much worse than the Second World War that a reordering of international institutions of a similar scale may be entirely justified. And while there might not be much appetite now, there may be in the near future if a risk increases to the point where it looms very large in the public consciousness, or if there is a global catastrophe that acts as a warning shot. So we should be open to blue-sky thinking about ideal international institutions, while at the same time considering smaller changes to the existing set.